The Agent Compass (Part 1)

Agent means everything and nothing in today's GenAI landscape. Let's shed some light on this topic.

The concept of Agent is one of the vaguest out there in the post-ChatGPT landscape. The word has been used to identify systems that seem to have nothing in common with one another, from complex autonomous research systems down to a simple sequence of two predefined LLM calls. Even the distinction between Agents and techniques such as RAG and prompt engineering seems blurry at best.

Let’s try to shed some light on the topic by understanding just how much the term “AI Agent” covers and by setting some landmarks to better navigate the space.

Defining “Agent”

The problem starts with the definition of “agent”. For example, Wikipedia reports that a software agent is

a computer program that acts for a user or another program in a relationship of agency.

This definition is extremely high-level, to the point that it could be applied to systems ranging from ChatGPT to a thermostat. However, if we restrict our definition to “LLM-powered agents”, then it starts to mean something: an Agent is an LLM-powered application that is given some agency, which means that it can take actions to accomplish the goals set by its user. Here we see the difference between an agent and a simple chatbot: a chatbot can only talk to a user, but doesn’t have the agency to take any action on their behalf. An Agent, instead, is a system you can effectively delegate tasks to.

In short, an LLM-powered application can be called an Agent when

it can make decisions and choose to perform actions in order to achieve the goals set by the user.

Autonomous vs Conversational

On top of this definition there’s an additional distinction to take into account, normally brought up by the terms autonomous and conversational agents.

Autonomous Agents are applications that don’t use conversation as a tool to accomplish their goal. They can use several tools several times, but they won’t produce an answer for the user until their goal is fully accomplished. These agents normally interact with a single user, the one who set their goal, and the whole visible result of their operations might be a simple notification that the task is done. Their ability to understand language is mostly a feature that lets them receive the user’s task in natural language, understand it, and then navigate the material they need to use (emails, webpages, etc.).

An example of an autonomous agent is a virtual personal assistant: an app that can read through your emails and, for example, pay your bills when they’re due. This is a system that the user sets up with a few credentials and that then works autonomously on the user’s behalf, without their supervision and possibly without bothering them at all.
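To make the autonomous loop concrete, here is a minimal Python sketch of the bill-paying assistant, under heavy assumptions: the `World` inbox, `read_unpaid_bills`, `pay_bill` and `decide_next_action` are hypothetical stand-ins, and the decision an LLM would normally make is replaced by a hard-coded rule so the example runs without a model. What it shows is the shape of the behavior: the agent keeps choosing and using tools on its own, and the user only sees a final notification.

```python
from dataclasses import dataclass, field
from typing import Optional, Tuple

@dataclass
class World:
    """Hypothetical in-memory stand-in for the user's mailbox and payment history."""
    inbox: list = field(default_factory=list)
    paid: list = field(default_factory=list)

def read_unpaid_bills(world: World) -> list:
    # Tool 1 (stub): scan the inbox for bills that haven't been paid yet.
    return [m for m in world.inbox if m["type"] == "bill" and m["id"] not in world.paid]

def pay_bill(world: World, bill: dict) -> None:
    # Tool 2 (stub): a real agent would call a payment service here.
    world.paid.append(bill["id"])

def decide_next_action(world: World) -> Tuple[str, Optional[dict]]:
    # Stand-in for the LLM's decision step: a fixed rule instead of a model call.
    pending = read_unpaid_bills(world)
    if pending:
        return "pay_bill", pending[0]
    return "done", None

def run_autonomous_agent(world: World, max_steps: int = 20) -> str:
    # No conversation happens inside the loop: the agent acts until the goal is met.
    for _ in range(max_steps):
        action, arg = decide_next_action(world)
        if action == "done":
            # The only output the user ever sees is this notification.
            return f"Done: paid {len(world.paid)} bill(s)."
        if action == "pay_bill":
            pay_bill(world, arg)
    return "Stopped: step budget exhausted."

world = World(inbox=[
    {"id": "b1", "type": "bill"},
    {"id": "n1", "type": "newsletter"},
    {"id": "b2", "type": "bill"},
])
print(run_autonomous_agent(world))  # prints "Done: paid 2 bill(s)."
```

A real assistant would also need safeguards such as a step budget (`max_steps` here), so that a confused decision step cannot keep acting forever.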

Conversational Agents, on the contrary, use conversation as a tool, often their primary one. This doesn’t have to be a conversation with the person who set them off: it’s usually a conversation with another party, who may or may not be aware that they’re talking to an autonomous system. Naturally, they behave like agents only from the perspective of the user who assigned them the task, while in many cases they have very limited or no agency from the perspective of the users who hold the conversation with them.

An example of a conversational agent is a virtual salesman: an app that takes a list of potential clients and calls them one by one, trying to persuade them to buy. From the perspective of the clients receiving the call, this bot is not an agent: it can perform no actions on their behalf; in fact, it may not be able to perform any action at all other than talking to them. But from the perspective of the salesman, the bot is an agent, because it’s calling people on their behalf and saving them a lot of time.

The distinction between these two categories is very blurry, and some systems may behave like both depending on the circumstances. For example, an autonomous agent might become a conversational one if it’s configured to reschedule appointments for you by calling people, or to reply to emails in order to automatically challenge parking fines, and so on. Conversely, an LLM that asks you whether it’s appropriate to use a tool before using it is behaving a bit like a conversational agent, because it’s using the chat to improve its odds of giving you a better result.

Degrees of agency

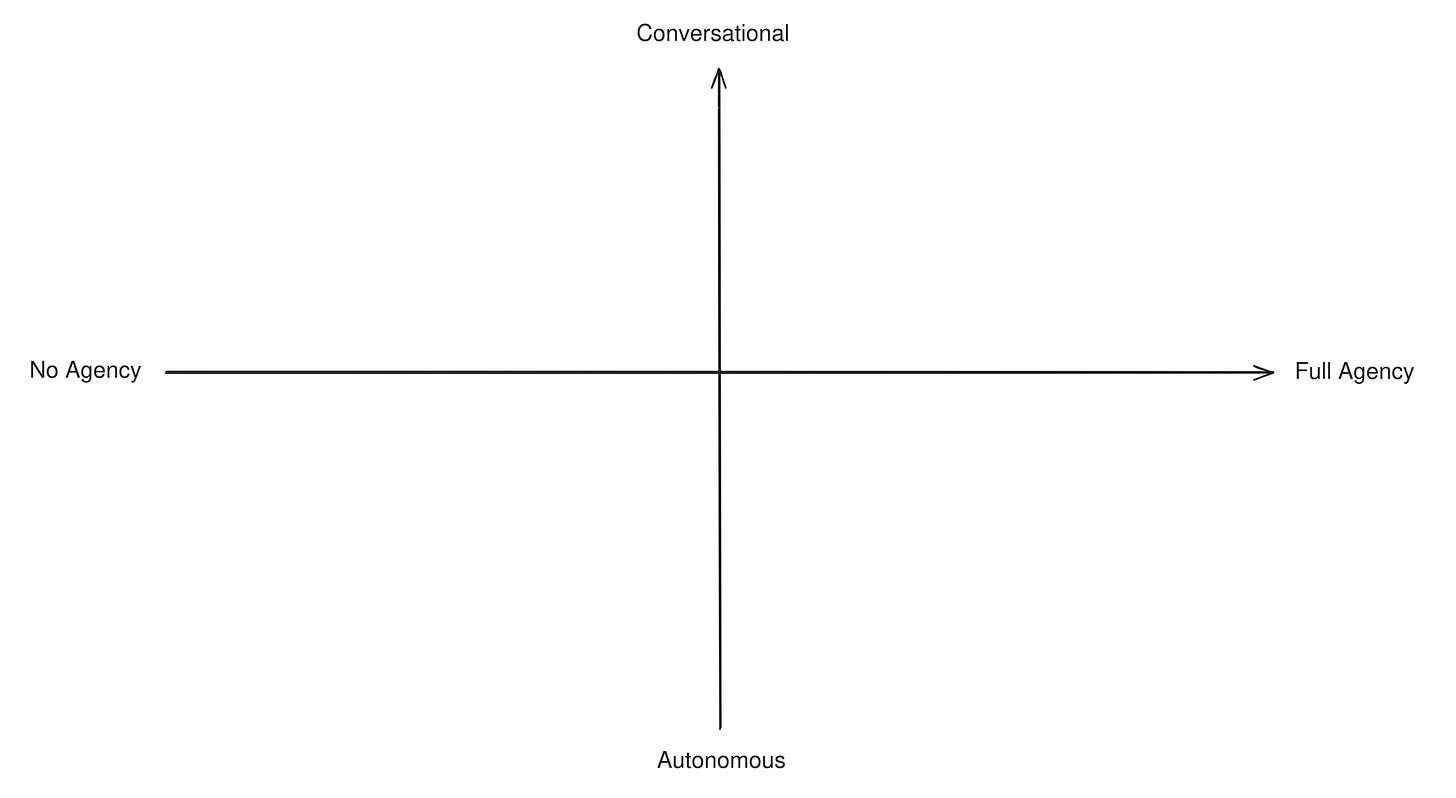

All the distinctions we made above are best understood as a continuous spectrum rather than hard categories. Various AI systems may have more or less agency and may be tuned towards a more “autonomous” or “conversational” behavior.

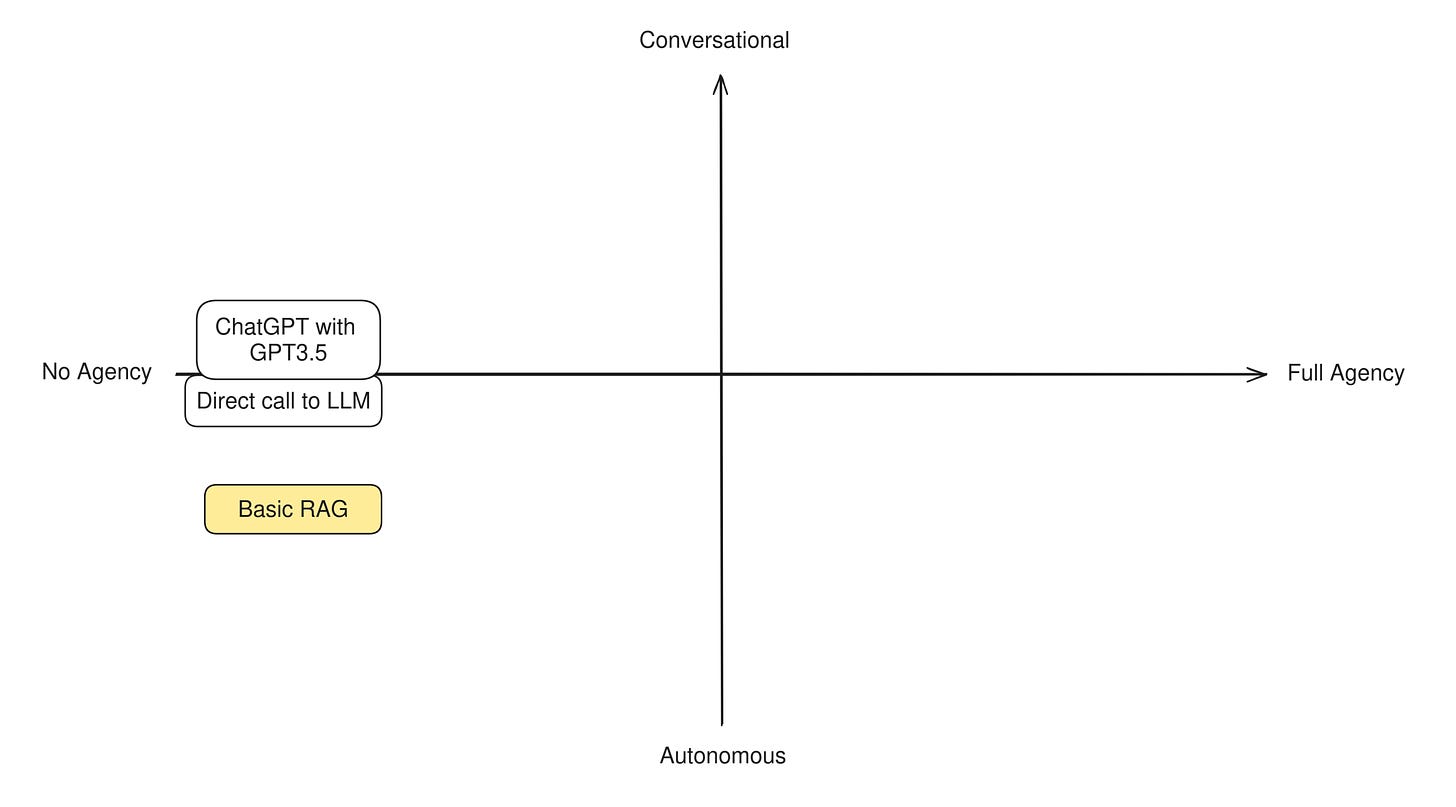

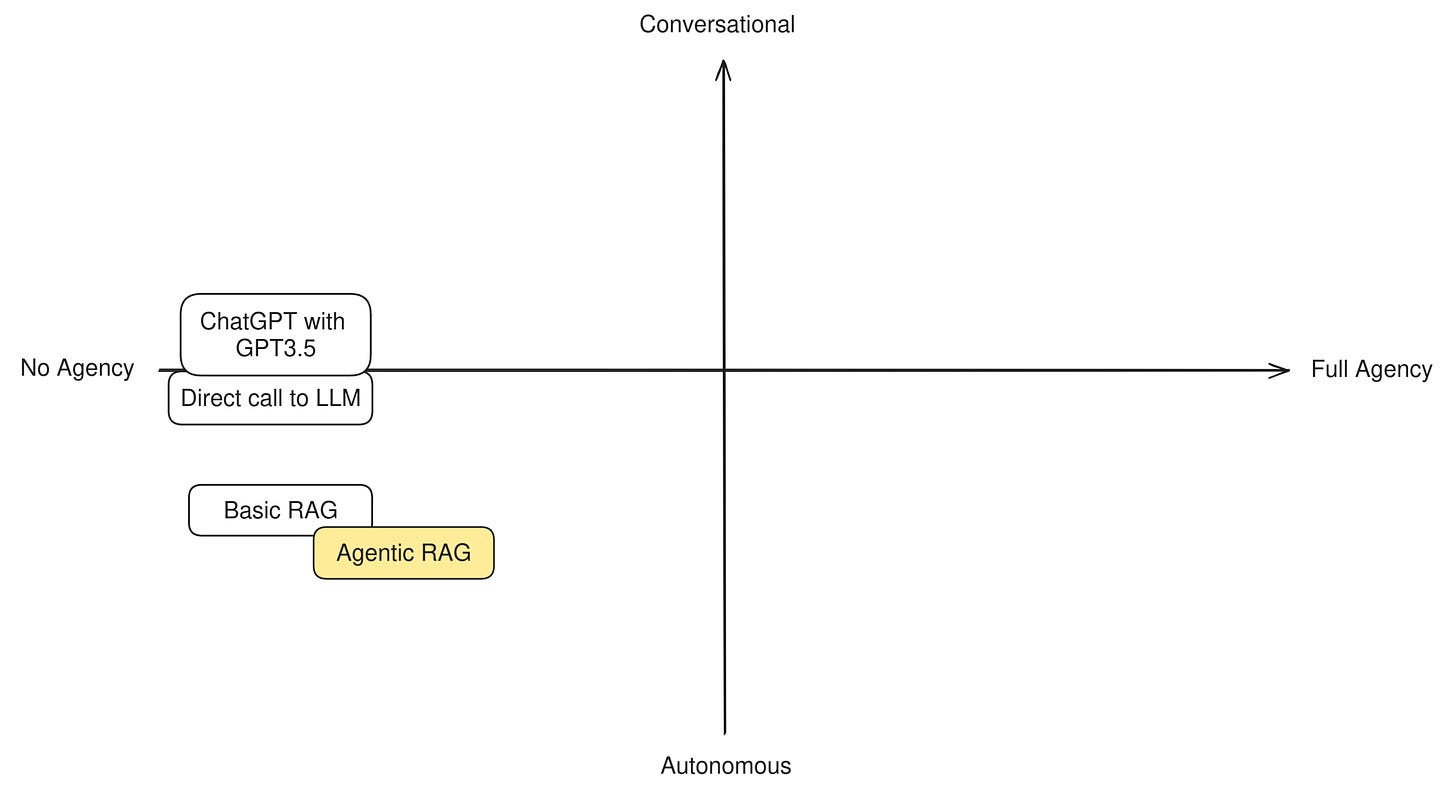

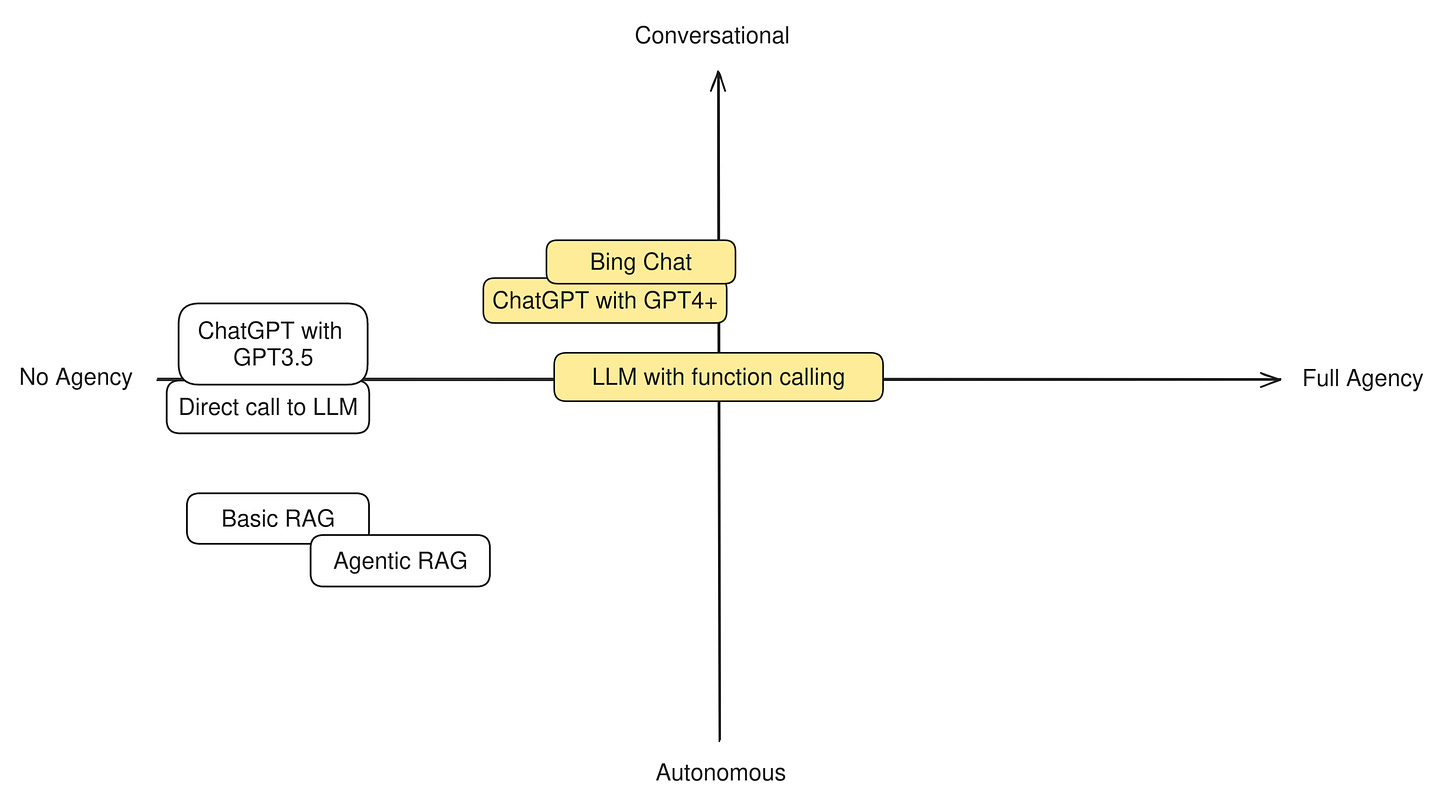

In order to understand this difference in practice, let’s try to categorize some well-known LLM techniques and apps to see how “agentic” they are. With two axes to measure by, we can build a simple compass like this:

Our Agent compass

Bare LLMs

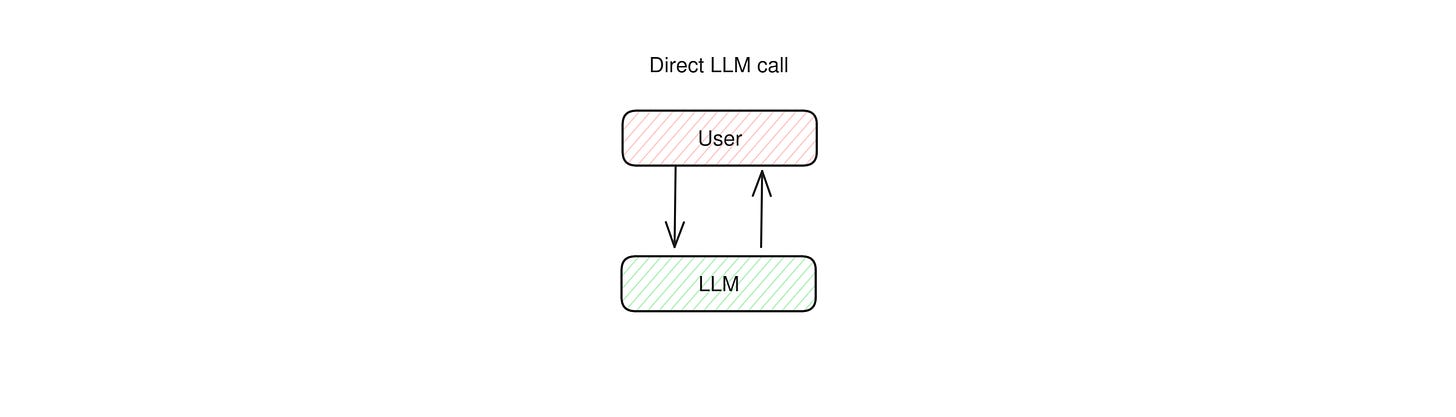

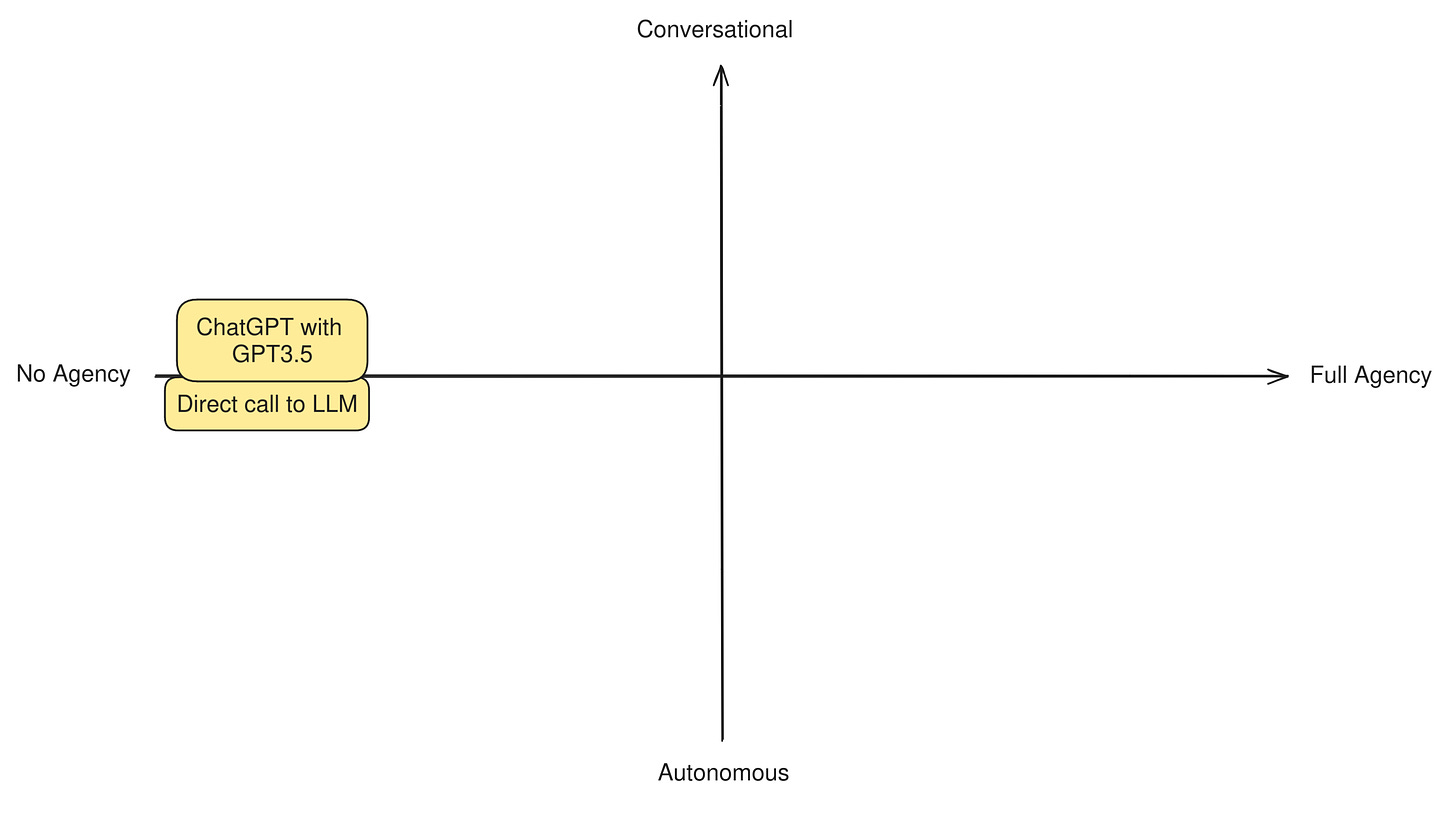

Many apps out there do nothing more than make direct calls to an LLM, such as ChatGPT’s free app and other similarly simple assistants and chatbots. These systems have no components other than the model itself, and their mode of operation is very straightforward: a user asks the LLM a question, and the LLM replies directly.

These systems are not designed with the intent of accomplishing a goal, nor can they take any actions on the user’s behalf. They focus on talking with a user in a reactive way and can do nothing but talk back. An LLM on its own has no agency at all.

At this level it also makes very little sense to distinguish between autonomous and conversational agent behavior, because the entire app shows no degree of autonomy. So we can place these apps at the very center-left of the diagram.

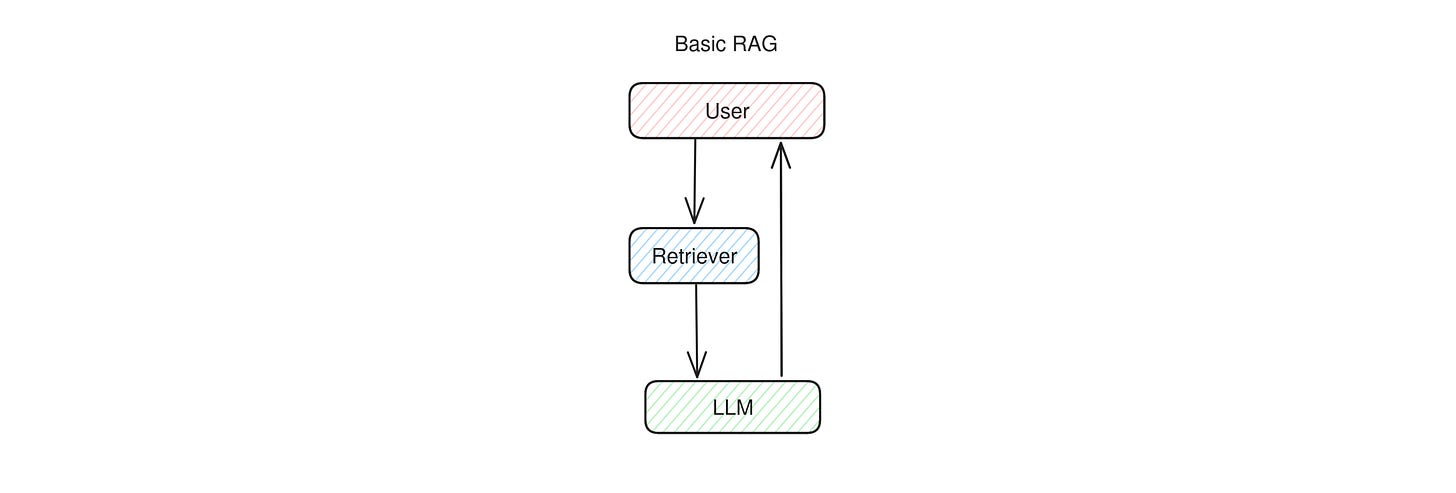

Basic RAG

Together with direct LLM calls and simple chatbots, basic RAG is another example of an application that does not need any agency or goals to pursue in order to function. Simple RAG apps work in two stages: first, the user’s question is sent to a retriever system, which fetches some additional data relevant to the question. Then the question and the additional data are sent to the LLM, which formulates an answer.
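The two stages can be sketched in a few lines of Python. This is an illustration under stated assumptions, not a production design: `retrieve` is a hypothetical word-overlap retriever standing in for a real vector or keyword search, `DOCUMENTS` is a toy corpus, and `llm` is any function from prompt to text. Note that the control flow is fixed: retrieval always runs, and the LLM only sees the final prompt.

```python
import re
from typing import Callable

# Toy corpus (hypothetical); a real app would query a document store.
DOCUMENTS = [
    "The Eiffel Tower is in Paris.",
    "Python was created by Guido van Rossum.",
    "Mount Everest is the highest mountain above sea level.",
]

def tokenize(text: str) -> set:
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def retrieve(question: str, documents: list, top_k: int = 1) -> list:
    # Stage 1: rank documents by how many words they share with the question.
    words = tokenize(question)
    ranked = sorted(documents, key=lambda d: len(words & tokenize(d)), reverse=True)
    return ranked[:top_k]

def build_prompt(question: str, context: list) -> str:
    # Stage 2: the question and the retrieved data are packed into one prompt.
    joined = "\n".join(f"- {c}" for c in context)
    return f"Answer using this context:\n{joined}\n\nQuestion: {question}"

def basic_rag(question: str, llm: Callable[[str], str], documents: list = DOCUMENTS) -> str:
    # Fixed pipeline: no decision is made, retrieval always happens.
    context = retrieve(question, documents)
    return llm(build_prompt(question, context))
```

Passing `lambda p: p` as `llm` returns the prompt itself, which is a convenient way to inspect exactly what the model would receive.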

This means that simple RAG is not an agent: the LLM has no role in the retrieval step and simply reacts to the RAG prompt, doing little more than what a direct LLM call does. The LLM is given no agency, makes no decisions in order to accomplish its goals, and has no tools it can decide to use or actions it can decide to take. It’s a fully pipelined, reactive system. However, we may place basic RAG slightly further toward the autonomous side than a direct LLM call, because one step is done autonomously (the retrieval).

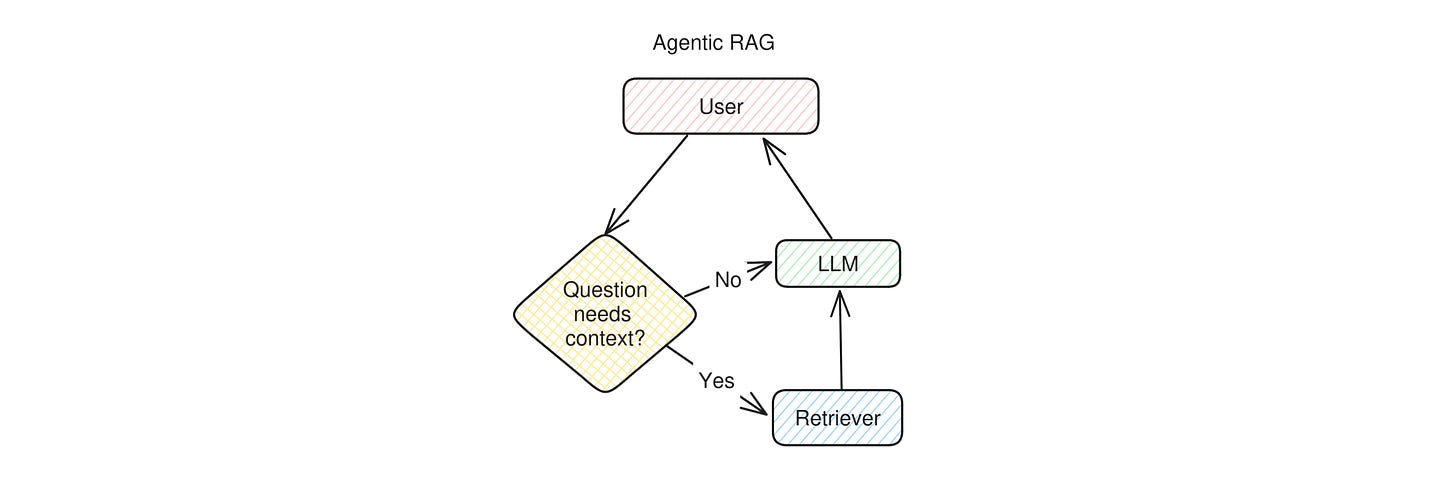

Agentic RAG

Agentic RAG is a slightly more advanced version of RAG that does not always perform the retrieval step. This helps the app produce better prompts for the LLM: for example, if the user is asking a question about trivia, retrieval is very important, while if they’re quizzing the LLM with some mathematical problem, retrieval might confuse the LLM by giving it examples of solutions to different puzzles, and therefore make hallucinations more likely.

This means that an agentic RAG app works as follows: when the user asks a question, before calling the retriever the app checks whether the retrieval step is necessary at all. Most of the time this preliminary check is done by an LLM as well, but in theory the same check could be done by a properly trained classifier model. Once the check is done, retrieval is run if it was deemed necessary; otherwise the app skips directly to the LLM, which then replies to the user.
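The sketch below adds that preliminary check in front of a toy retriever. It is an assumption-laden illustration: `needs_retrieval` stands in for the LLM or classifier described above and is just a hypothetical regular expression that skips retrieval for questions that look like arithmetic, while `retrieve` is a hypothetical word-overlap retriever over a two-document corpus.

```python
import re
from typing import Callable

# Toy corpus (hypothetical).
DOCUMENTS = [
    "The Eiffel Tower is in Paris.",
    "Python was created by Guido van Rossum.",
]

def retrieve(question: str, documents: list) -> str:
    # Toy retriever (hypothetical): return the document sharing the most words.
    words = set(re.findall(r"[a-z0-9]+", question.lower()))
    return max(documents, key=lambda d: len(words & set(re.findall(r"[a-z0-9]+", d.lower()))))

def needs_retrieval(question: str) -> bool:
    # Stand-in for the preliminary check, normally an LLM call or a trained
    # classifier. Hypothetical rule: arithmetic-looking questions skip retrieval.
    return re.search(r"\d+\s*[-+*/]\s*\d+", question) is None

def agentic_rag(question: str, llm: Callable[[str], str], documents: list = DOCUMENTS) -> str:
    # The one decision this app makes: use the retriever or not.
    if needs_retrieval(question):
        context = retrieve(question, documents)
        prompt = f"Answer using this context:\n- {context}\n\nQuestion: {question}"
    else:
        # Skip straight to the LLM, so unrelated examples can't confuse it.
        prompt = f"Question: {question}"
    return llm(prompt)
```

Swapping the regular expression for a real classifier or an LLM prompt would change how good the decision is, not the structure of the app.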

You can see immediately that there’s a fundamental difference between this type of RAG and the basic pipelined form: the app needs to make a decision in order to accomplish the goal of answering the user. The goal is very limited (giving a correct answer to the user), and the decision very simple (whether or not to use a single tool), but this little bit of agency given to the LLM places an application like this clearly further toward the Agent side of the diagram.

We keep Agentic RAG towards the Autonomous side because in the vast majority of cases the decision to invoke the retriever is kept hidden from the user.

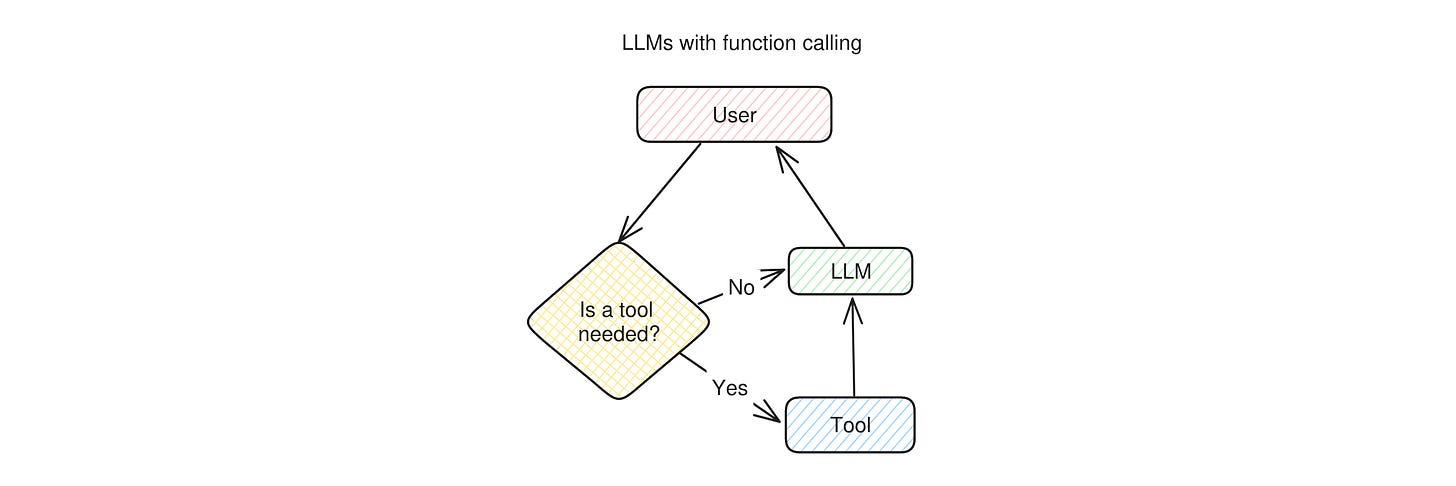

LLMs with function calling

Some LLM applications, such as ChatGPT with GPT4+ or Bing Chat, can let the LLM use some predefined tools: a web search, an image generator, and maybe a few more. The way they work is quite straightforward: when a user asks a question, the LLM first needs to decide whether it should use a tool to answer the question. If it decides that a tool is needed, it calls it; otherwise it skips directly to generating a reply, which is then sent back to the user.

You can see how this diagram resembles agentic RAG’s: before giving an answer to the user, the app needs to make a decision.

Compared to Agentic RAG, this decision is a lot more complex: it’s not a simple yes/no decision, but involves choosing which tool to use and also generating the input parameters that will make the selected tool produce the desired output. In many cases the tool’s output is given back to the LLM to be re-elaborated (such as the output of a web search), while in others it can go directly to the user (as in the case of image generators). All this implies that more agency is given to the system, which can therefore be placed more clearly towards the Agent end of the scale.
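A minimal sketch of this flow, under clearly hypothetical assumptions: `choose_tool` plays the role of the LLM deciding which tool to call and with which arguments (a real app would receive this as a structured tool call from the model), `web_search` and `generate_image` are stubs, and the `output_to_user` flag captures the difference between outputs that go back to the LLM and outputs that go straight to the user.

```python
from dataclasses import dataclass
from typing import Callable, Optional

@dataclass
class Tool:
    name: str
    run: Callable[[dict], str]
    output_to_user: bool  # True: return output directly; False: feed it back to the LLM

def web_search(args: dict) -> str:
    return f"(search results for '{args['query']}')"  # hypothetical stub

def generate_image(args: dict) -> str:
    return f"<image of {args['prompt']}>"  # hypothetical stub

TOOLS = {
    t.name: t
    for t in [Tool("web_search", web_search, False), Tool("image_generator", generate_image, True)]
}

def choose_tool(question: str) -> Optional[dict]:
    # Stand-in for the LLM's decision: pick a tool (or none) AND fill in its arguments.
    q = question.lower()
    if q.startswith("draw "):
        return {"tool": "image_generator", "args": {"prompt": question[5:]}}
    if "latest" in q or "today" in q:
        return {"tool": "web_search", "args": {"query": question}}
    return None

def fake_llm(prompt: str) -> str:
    return f"LLM answer based on: {prompt}"  # hypothetical model

def answer(question: str, llm: Callable[[str], str] = fake_llm) -> str:
    call = choose_tool(question)
    if call is None:
        return llm(question)  # no tool needed: reply directly
    tool = TOOLS[call["tool"]]
    output = tool.run(call["args"])
    if tool.output_to_user:
        return output  # e.g. image generators: output goes straight to the user
    return llm(f"{question}\n\nTool output: {output}")  # e.g. web search: re-elaborated by the LLM
```

Compared with the agentic RAG check, the decision step now returns a tool name plus generated arguments rather than a single yes/no.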

We place LLMs with function calling in the middle between Conversational and Autonomous because the degree to which the user is aware of this decision varies greatly between apps. For example, Bing Chat and ChatGPT normally notify the user when they’re about to use a tool, and the user can instruct them whether or not to use it, so they lean slightly toward the conversational side.
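Where an app sits on this axis is largely a design choice about how tool use is surfaced. As a hypothetical sketch (the three modes and the `call_tool`, `tell_user` and `ask_user` names are illustrative, not how Bing Chat or ChatGPT are actually implemented), the same tool call can run silently, announce itself, or ask for permission first:

```python
from typing import Callable, Literal, Optional

# "silent" hides tool use (more autonomous); "notify" and "confirm" surface it
# to the user (more conversational). Hypothetical policy names.
Mode = Literal["silent", "notify", "confirm"]

def call_tool(
    tool_name: str,
    args: dict,
    run_tool: Callable[[str, dict], str],
    mode: Mode,
    tell_user: Callable[[str], None],
    ask_user: Callable[[str], bool],
) -> Optional[str]:
    """Run a tool under a visibility policy; returns None if the user declines."""
    if mode == "notify":
        # Tell the user what is happening, but don't wait for an answer.
        tell_user(f"Using {tool_name}...")
    elif mode == "confirm":
        # Use the conversation itself to decide whether to act.
        if not ask_user(f"May I use {tool_name} with {args}?"):
            return None
    return run_tool(tool_name, args)
```

Moving from `"silent"` to `"confirm"` doesn’t change what the agent can do, only how much of the conversation it uses to decide, which is exactly the autonomous/conversational axis of the compass.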

Continues in “The Agent Compass (Part 2)”